The progress of the Internet of Things (IoT) and its industrial use has been nothing short of amazing. The IoT as we know it has been helped along by organizations that take ownership of the process and apply the results to build more efficient systems.

Since I have worked with Big Data, Machine Learning, and Data Science for an extended period of time, the implications for IoT, and the fact that most industrial giants need external assistance, are nothing new to me. In fact, looking at today's IoT sphere through an experienced eye makes it easier to gauge what those implications are.

For this article, I had the assistance of Taj Darra, a data scientist at DataRPM. Darra, who worked at IBM before his current stint at DataRPM, is at the forefront of DataRPM’s mission to put an end to the difficulties firms currently experience with cognitive anomaly detection.

The market for Industrial IoT (IIoT) is made up of large firms that lack the technology and the time to study and match all their data in pursuit of a foolproof system. Here, firms like DataRPM can make a name for themselves by giving these firms the leverage they need to handle the data implications of IIoT.

Challenges in the Market

The biggest reason firms such as DataRPM and data scientists such as Taj Darra are in business is that companies face challenges in almost everything pertaining to data. Most of the giants in industries such as car manufacturing and transportation have implemented exemplary data collection methods. These methods do their job well and hand the necessary input to the organizations that run them. Once the data is collected and stored, however, the real challenge of analytics arises. Despite having rigorous data collection and storage facilities, these firms don’t know what to do with their data or how to find actionable results.

Another challenge is the high volume of incoming data. With some firms gathering over 250 petabytes of data a day, structuring the data becomes a real challenge. Moreover, the analytics need to be done in real time, and to keep up with that pace, these giants need a dedicated unit.

It is also worth mentioning that the technologies on today’s market have changed considerably over the last decade to align with the needs of the data being collected. For example, when IoT sensors were installed at a location a decade ago, we didn’t have platforms to assess multiple formats and see the data for ourselves. The problem of multiple formats has already been partially addressed, however, and will eventually go away as we move into the future.

A common question that arises here concerns the increasing use of machine learning in businesses. If businesses could model their equipment with the laws of physics, why is there a tilt toward methods like machine learning? The answer is simply “big data.” Manual methodologies could have worked with smaller amounts of data, but big data means that we need to equip machines to comprehend the data and recognize when an anomaly occurs.

Need for Better Methods in Anomaly Detection

The biggest problem facing businesses today is that only 20 percent of all problems or anomalies that occur are predicted and understood beforehand. This means that around 80 percent of the problems businesses face are unpredicted, and the business is not prepared to handle them because of subpar anomaly detection.

The traditional methods of anomaly detection are not getting the job done. Here are a few of the reasons why anomaly detection leaves much to be desired when it is done through traditional methods.

- Traditional time series analysis methods try to relate a single behavioral pattern with the entire series.

- Too many anomalies happen on a regular basis. Traditional detection methods cannot keep up with the pace, and end up missing some of them.

- Anomaly detection in traditional methods occurs only as a reaction.

- Traditional Machine Learning methods use sample data to train the model. This can limit the effectiveness of the results.

- Anomalies that have occurred in the past may not be a perfect indication of problems occurring in the future.

- Traditional Machine Learning methods build a single prediction model for all entities.

- Each entity has multiple stages, so it cannot be described by a single model.

- Even values that seem normal on their own but do not fit the sequence are anomalies. The failure of the system to detect them can lead to problems later on.

- Anomalies are rare, so the data samples used by traditional methods to train the model may not contain signals that indicate failure.
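To make the single-model limitation concrete, here is a minimal sketch, not DataRPM's method. It assumes two hypothetical pumps with made-up readings and uses a plain z-score as a stand-in for a "model". A reading of 75 sits comfortably inside the pooled range of both pumps, so a single global baseline does not flag it, while a baseline built for the pump that produced it does.

```python
from statistics import mean, pstdev

def z_score(value, history):
    """Number of standard deviations between value and the mean of history."""
    sigma = pstdev(history)
    return 0.0 if sigma == 0 else (value - mean(history)) / sigma

# Hypothetical readings for illustration: two pumps with different normal levels.
readings = {
    "pump_a": [50, 51, 49, 50, 52, 48, 50, 51],
    "pump_b": [100, 101, 99, 100, 102, 98, 100, 101],
}
new_value = 75  # new reading reported by pump_a
THRESHOLD = 3.0  # assumed cut-off, in standard deviations

# One model for all entities: pool every reading into a single baseline.
pooled = [v for history in readings.values() for v in history]
flagged_globally = abs(z_score(new_value, pooled)) > THRESHOLD

# One model per entity: compare the reading only with pump_a's own history.
flagged_per_entity = abs(z_score(new_value, readings["pump_a"])) > THRESHOLD

print(f"single global model flags it: {flagged_globally}")
print(f"per-entity model flags it:    {flagged_per_entity}")
```

The threshold of 3.0 is an arbitrary choice for the sketch; in practice it would be tuned per attribute.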

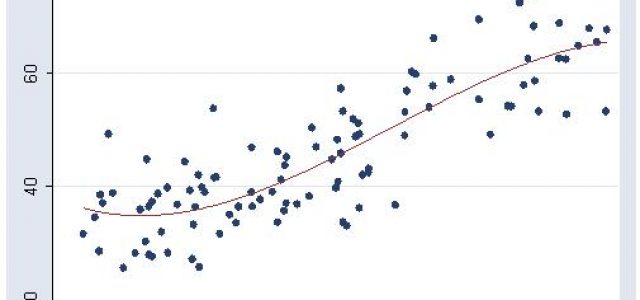

Cognitive anomaly detection differs from the traditional method in that it is a machine-first and data-first solution. Traditional methods use a top-down approach, whereas the most advanced anomaly detection methods use a bottom-up approach, which departs from what the industry has been doing to date.

The bottom-up approach is based on several steps that aim to make anomaly detection more effective. These steps are:

- Analyze each and every attribute related to each and every entity in detail.

- Detect stages in each attribute.

- Establish a normal benchmark for each entity based on comparison between the attributes of the entity and those from others.

- Identify anomalies and generate a score.

- Identify anomalies in sequences of values.

- Derive multi-attribute anomalies by combining attributes.

- Group all entities similar to one another.
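A few of these steps can be sketched in code. The sketch below is an illustration under stated assumptions, not DataRPM's implementation: the motors, attribute names, readings, cut-off of 5.0, and bucket width are all hypothetical, and stage detection and sequence anomalies are left out for brevity. It builds a benchmark per attribute per entity (median and median absolute deviation), scores the latest reading against that benchmark, combines attribute scores into one entity score, and groups entities with similar operating levels.

```python
from statistics import median

def robust_score(value, history):
    """Distance from the median in units of median absolute deviation (MAD)."""
    med = median(history)
    mad = median(abs(v - med) for v in history)
    return abs(value - med) / (mad or 1.0)  # avoid dividing by a zero MAD

def score_entity(history, latest):
    """Score each attribute on its own, then combine into one entity score."""
    per_attribute = {
        name: robust_score(latest[name], history[name]) for name in latest
    }
    return per_attribute, max(per_attribute.values())

def group_entities(fleet, attribute, bucket_width):
    """Group entities whose median level for an attribute shares a bucket."""
    groups = {}
    for entity, history in fleet.items():
        bucket = int(median(history[attribute]) // bucket_width)
        groups.setdefault(bucket, []).append(entity)
    return groups

# Hypothetical fleet: past readings per entity and attribute.
fleet = {
    "motor_1": {"temp_c": [60, 61, 59, 60, 62],
                "vibration": [0.20, 0.21, 0.19, 0.20, 0.22]},
    "motor_2": {"temp_c": [61, 60, 62, 61, 59],
                "vibration": [0.21, 0.20, 0.22, 0.19, 0.20]},
    "motor_3": {"temp_c": [90, 91, 89, 90, 92],
                "vibration": [0.50, 0.51, 0.49, 0.50, 0.52]},
}
# Latest reading per entity; motor_1 vibrates far more than it usually does.
latest = {
    "motor_1": {"temp_c": 61, "vibration": 0.45},
    "motor_2": {"temp_c": 60, "vibration": 0.21},
    "motor_3": {"temp_c": 91, "vibration": 0.51},
}

scores = {name: score_entity(fleet[name], latest[name])[1] for name in fleet}
flagged = [name for name, score in scores.items() if score > 5.0]
groups = group_entities(fleet, "temp_c", bucket_width=10)

print("flagged entities:", flagged)
print("groups by temperature level:", groups)
```

Note that a vibration of 0.45 is normal for motor_3 but not for motor_1, which is why the benchmark is built per entity rather than once for the whole fleet. Taking the maximum attribute score is one simple way to combine attributes; a weighted sum or a joint model would be equally valid choices.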

Advantages of This Approach

The future of anomaly detection looks bright, but to get a complete picture we need to know how current data platform providers fit in. Watson from IBM and Azure IoT can be thought of as Lego sets handed to users who want to benefit from them. We know that the end product will be beneficial and might even move our IoT endeavors forward, but what matters is completing these Lego sets. DataRPM steps in here to provide users with a manual that helps them understand what they are building.

Thus, the offerings from these tech giants cannot be considered direct competition; they complement the bottom-up approach, and the two work hand in hand toward better anomaly detection.
