More than a year ago, in January 2016, I imagined the future of Procurement technology. My idea of a Procurement Assistant that you could interact with via chat came from the growing interest in chatbot development. Most mainstream communication platforms already have one or are working on one.

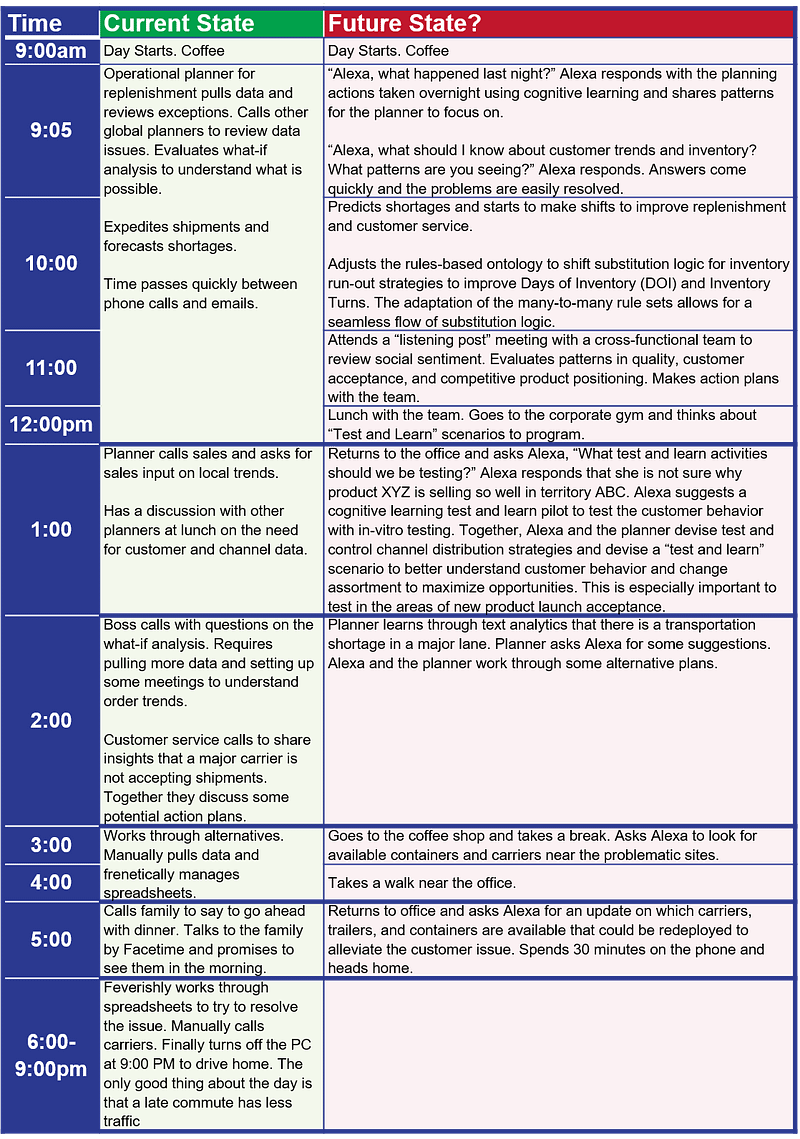

More recently, in February this year, Lora Cecere, a thought leader in supply chain, followed a similar road. She imagined what it would be like to use Amazon’s Alexa as a planner.

Since January, and based on more research, I have matured the idea of conversational technology in Procurement a bit further, especially regarding the use of written versus spoken communication. My initial article focused on the written form, and Cecere’s focused exclusively on oral communication. I believe that the real secret to success will be to use both, because each has pros and cons that make it fit for purpose only in certain circumstances. Using both is also a critical component of delivering an omnichannel experience to users of Procurement: it enables them to select the mode they prefer based on their personal preferences and on the context of the interaction.

Conversational UI: a massive opportunity (for Procurement too)

Besides voice, the concept of conversational interfaces also includes written conversations. Conversational user interfaces (CUI) and conversational user experience (CUX) are hot topics in the technology world. This is, in part, the result of the popularization of assistants such as Siri (Apple), Cortana (Microsoft), and Google’s assistant, which increased acceptance by users. Beyond helping people use their phone or computer, conversational UIs represent a large opportunity in business, both B2C and B2B, to engage with people in a more effective and efficient way.

“As we move away from assistants who perform simple automated tasks, 2017 has the potential to turn voice assistant technology into a virtual customer service functionality. The convergence of chatbots and virtual voice assistants has the potential to completely change the retail experience.” The impact of voice-activated virtual assistants on retail, VentureBeat, Feb. 2017

Chat: back to the future?

One aspect of conversational UIs that I focused on in my 2016 article is text-based communication. The communication is not between people; it is a conversation with an artificial intelligence. It sounds like a jump back into the past because conversing with a computer via text (and only text) is what command-line interfaces allowed.

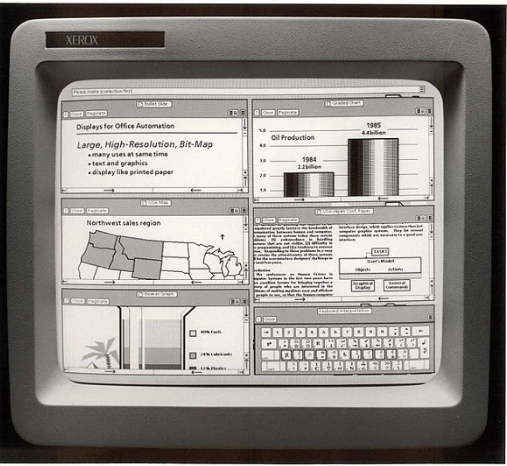

Then, Xerox’s Palo Alto Research Center introduced the first graphical user interfaces, which increased usability and accessibility. Apple and then Microsoft popularized them.

So, even if chatbots are a sort of revival of command-line interfaces, they are very different from these distant ancestors.

Chatbots are much more intelligent and feature-rich:

- You can converse in natural language without having to use explicit and codified instructions. Chatbots understand what you say and answer in everyday language.

- They can perform complex tasks and make decisions autonomously.

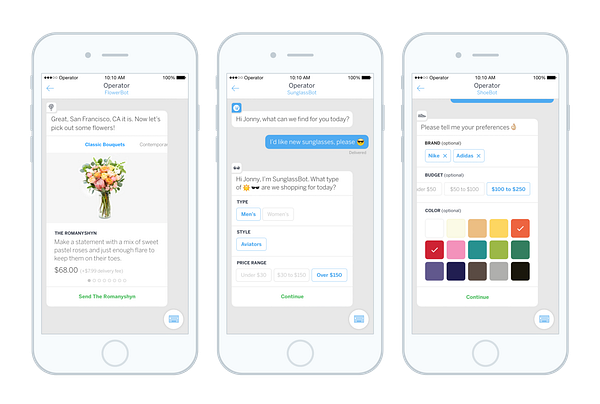

- A message can itself be a mini application that gives access to rich functionality, removing the need to install and run other applications.

- They can be context aware because they remember you and all the past discussions, know where you are, and can tap into data from all other apps (emails, calendars, contacts, …).

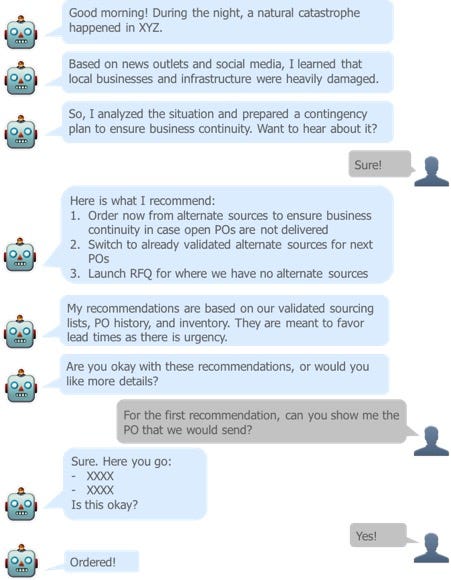

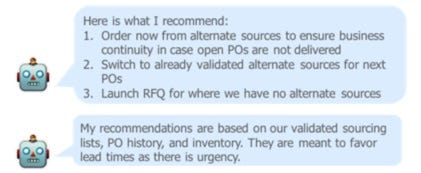

As far as Procurement is concerned, there are many use cases. Here is an example showing how the chatbot could proactively initiate a conversation after a natural disaster:

As I have already covered a couple of other use cases of chat-based conversations in Procurement, I want to focus on another very promising channel: voice.

Voice: the next user interface?

There is nothing new about spoken language. People were using it long before they invented a written form of communication. So, to some extent, Siri (Apple), Cortana (Microsoft), and Google’s assistant are a return to an older and more natural form of communication, made possible by progress in technology. Besides returning to the roots of human communication, they also answer real use cases that chat does not cover as well.

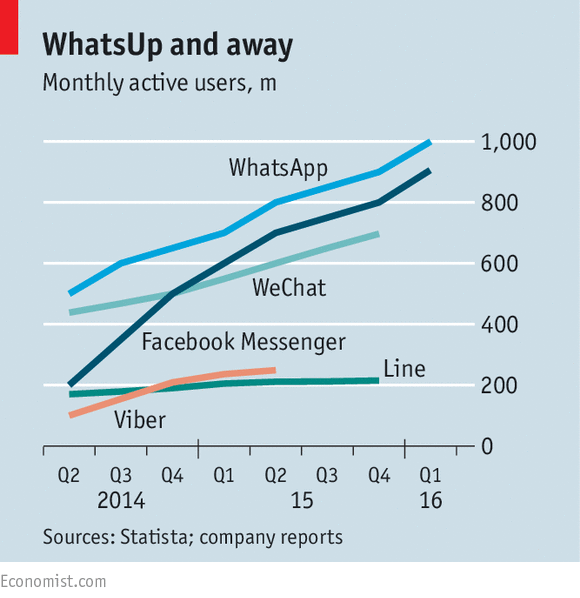

One of the benefits of interacting with computers through spoken rather than written language is that interactions become even more natural, comfortable, and fast. This is especially valuable for languages that have a complex written form. Chinese has thousands of characters, which makes using a keyboard challenging even though simplified input methods exist for that purpose. It is no wonder that many Chinese companies, like Baidu (the Chinese Google), invest a vast amount of resources in R&D for voice-based assistants. It also explains the rapid and broad adoption of platforms like WeChat, thanks to its hold-to-talk voice messaging (it is worth noting that WeChat is also a leading example of the use of chatbots and mini-apps in a messaging platform).

“I see speech approaching a point where it could become so reliable that you can just use it and not even think about it,” says Andrew Ng, [former VP & Chief Scientist of Baidu, Co-Chairman and Co-Founder of Coursera, and an Adjunct Professor at Stanford University]. “The best technology is often invisible, and as speech recognition becomes more reliable, I hope it will disappear into the background.” Conversational Interfaces. Powerful speech technology from China’s leading Internet company makes it much easier to use a smartphone, MIT Technology Review

Interestingly enough, Chinese and other Asian languages are also very complex in their spoken forms. The fact that speech recognition is achieving such good results for complicated languages demonstrates the progress made in Natural Language Processing (NLP), a branch of AI (more on other enablers later…). Reaching a high level of accuracy is critical to ensure widespread adoption, and it is easy to envisage the application to easier languages as imminent with similar, if not better, results.

“Mr. Austin: Let’s talk about speech recognition. I believe someone in your program has said that the hope is to get to the point where it is 99% accurate. Where are you on that?

Mr. Ng: A couple of years ago, we started betting heavily on speech recognition because we felt that it was on the cusp of being so accurate that you would use it all the time. And the difference between speech recognition that is 95% accurate, which is where we were several years ago, versus 99% accuracy isn’t just an incremental improvement. It’s the difference between you barely using it, like a couple of years ago, versus you using it all the time and not even thinking about it. At Baidu we have passed the knee of that adoption curve. Over the past year, we’ve seen about 100% year-to-year growth in the daily active use of speech recognition across our assets, and we project that this will continue to grow. In a few years everyone will be using speech recognition. It will feel natural. You’ll soon forget what it was like before you could talk to computers.” How Artificial Intelligence Will Change Everything — WSJ, Interview of Baidu’s Andrew Ng in the Wall Street Journal, March 2017

Value and benefits of voice-activated assistants

“By 2020, 30% of web browsing sessions will be done without a screen.” Gartner’s Top 10 Strategic Predictions For 2017 And Beyond

Voice-activated assistants are more than just an aid for using a phone or a computer. They can make business easier too. Amazon realized this early on, which is why it is also investing heavily in that technology. Amazon’s Echo (the hardware) and Alexa (the AI-based assistant that lives in Echo) are examples of these efforts.

“Voice may provide the standard experience omni-channel has been looking for. I can talk to a virtual clerk in store, on my phone, via a voice-activated platform like Alexa, or through a chatbot within Messenger or within the company’s own app. This conversation could be carried across all channels, customizing my experience as I go.” The impact of voice-activated virtual assistants on retail, VentureBeat, Feb. 2017

The value proposition of this technology is about improving the customer experience. One aspect is tailoring the experience to each user thanks to all the past interactions stored in the memory of the AI. Machines remember everything, and logging user preferences is possible because conversations are digitized. This point also applies to written communication, but voice further streamlines the experience by making it fully touchless, natural (typing is not natural…), and transparent, especially on mobile devices. This is why voice-based assistants make sense for Procurement too, as part of improving the stakeholder / supplier / user experiences that are the cornerstone of the digital transformation of Procurement:

Procurement has to engage internal customers/stakeholders and suppliers in new ways and build omnichannel and replicable but unique experiences that fit with:

- the type of purchase,

- who purchases,

- the context of the purchase.

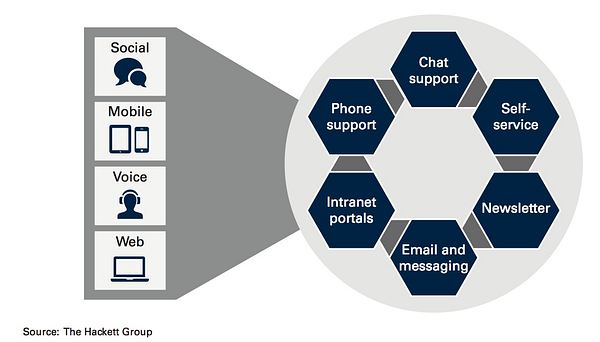

A recent report by the Hackett Group (The CPO Agenda: Keeping Pace With and Enabling Enterprise-Level Digital Transformation) confirms this and ranks “improving the stakeholder experience” as the number one priority for Procurement organizations. To create this omnichannel and personalized experience, the Hackett Group clearly highlights the role of “voice.”

“Delivering the customer experience must be embedded into all procurement activities. The pièce de resistance is creating better experiences for customers, being end-customers, buyers within the internal organization, suppliers, partners and your customers’ customers. It’s about creating buying experiences that meet the needs of more mature internal buyers, underpinned by seamless, straight through transactional processes. Effective procurement is all about enabling much more collaboration and innovation to take place with suppliers, providers, partners and customers and amongst them.” Procurement’s Survival Manifesto on a Knife-Edge as the As-A-Service Model takes hold, HFS Research, March 2017

Using “voice” as a channel next to the more traditional ones makes sense to create a better customer experience. It also makes sense from an efficiency point of view, as illustrated by Lora Cecere in her article, where she shows how using a voice-activated assistant would transform the job of a planner. The same would apply to many other scenarios and not just for planners.

The use of chat- or voice-based interactions with machines is now possible because several factors have converged to make it feasible and acceptable.

The first and most important one is that we all learn to communicate at a very young age, which means that the learning curve for interacting with applications is close to non-existent.

Technological foundation

As I mentioned earlier, one of the primary enablers is the current state of the technology that powers chatbots.

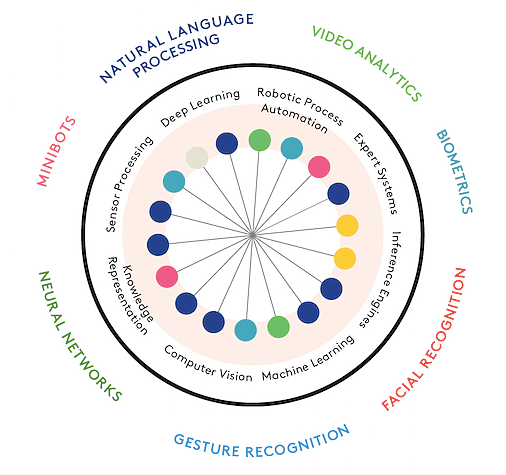

Natural Language Processing (NLP) is a branch of Artificial Intelligence (AI), and it is making enormous progress in part due to advances in another branch of AI, Machine Learning (ML).

(What’s the difference between Machine Learning, AI, and NLP?)

“Ask AI researchers what their next big target is, and they are likely to mention language. The hope is that techniques that have produced spectacular progress in voice and image recognition, among other areas, may also help computers parse and generate language more effectively”. 5 Big Predictions for Artificial Intelligence in 2017, MIT Technology Review

What is peculiar to NLP is that it relies on ML, which makes it possible to develop such technologies without requiring specialists in the language that the machine needs to understand.

“Deep Speech 2 [Baidu’s new speech recognition] is also striking because few of the researchers in the California lab where the technology was developed speak Mandarin, Cantonese, or any other variant of Chinese. The engine essentially works as a universal speech system, learning English just as well when fed enough examples”. Conversational Interfaces. Powerful speech technology from China’s leading Internet company makes it much easier to use a smartphone, MIT Technology Review

More on Baidu’s text-to-speech technology: Baidu Deep Voice explained: Part 1 — the Inference Pipeline by Dhruv Parthasarathy

Besides the technical feasibility, other important factors explain where we are now:

- the ubiquity of messaging applications

- the acceptance by the general public of chatbots.

Societal foundation

Everybody chats. Messaging apps are booming; there are approximately 23 billion texts sent every day, almost 16 million per minute! Messaging platforms and social networks profit from the explosion of mobile devices.

We are almost always on, connected to the internet, and reachable. Plus, phone manufacturers now all integrate digital assistants in their phones, exposing a large population to this new type of interaction. These assistants are also finding their way to our computers: Apple and Microsoft integrate their assistants into their desktop operating systems. As a result, there is an increased acceptance and usage of chat and of digital assistants, which is paving the way for business applications.

The proliferation of platforms (hardware and software) may look like a drawback, but it is actually an advantage: providers can develop bots (for written or spoken conversations) for each platform (SMS, instant messaging, Facebook Messenger, web…) so that users can select the channel they prefer based on context and preferences. Such an application would rely on:

- one single robust back-end (like Baidu’s Deep Speech 2) in charge of understanding the language and of performing actions requested by users

- one bot per channel that would be the visible part of the iceberg and that would use the communication protocol or API of the platform.
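The split between one shared back-end and one thin bot per channel can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class names (`ProcurementBackend`, `ChannelBot`), the renderers, and the keyword matching are all hypothetical, and the toy keyword check stands in for a real language-understanding engine.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Reply:
    text: str
    needs_validation: bool  # True when the assistant only recommends and the user decides


class ProcurementBackend:
    """Hypothetical shared back-end: interprets a request and prepares a reply."""

    def handle(self, utterance: str) -> Reply:
        # Toy keyword matching stands in for a real language-understanding engine.
        text = utterance.lower()
        if "order" in text:
            return Reply("I can draft a purchase order. Shall I proceed?", True)
        if "supplier" in text:
            return Reply("Here are your approved suppliers.", False)
        return Reply("Sorry, I did not understand. Try 'order' or 'supplier'.", False)


class ChannelBot:
    """Thin per-channel bot: forwards messages and formats replies for its platform."""

    def __init__(self, backend: ProcurementBackend, render: Callable[[Reply], str]):
        self.backend = backend
        self.render = render

    def receive(self, message: str) -> str:
        return self.render(self.backend.handle(message))


def render_sms(reply: Reply) -> str:
    # SMS: plain text only.
    return reply.text


def render_chat(reply: Reply) -> str:
    # A chat platform can show quick-reply buttons, simulated here as text.
    return reply.text + (" [Yes] [No]" if reply.needs_validation else "")


# Both bots share the same back-end instance.
backend = ProcurementBackend()
sms_bot = ChannelBot(backend, render_sms)
chat_bot = ChannelBot(backend, render_chat)

print(sms_bot.receive("Please order 10 laptops"))
print(chat_bot.receive("Please order 10 laptops"))
```

The point of the design is that adding a new channel only means writing a new renderer; the understanding and the actions stay in one place.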

The first and obvious problem is finding the right use case rather than succumbing to the latest buzz and trend. Because conversational commerce represents a massive market, some companies may be tempted to create a chatbot hoping to be the first one in their market or hoping to build new markets.

“Now that Mobile is no longer the hyper-growth sector, the tech industry is casting around looking for the Next Big Thing. I suspect that voice is certainly a big thing, but we’ll have to wait a bit longer for the next platform shift.” Voice and the uncanny valley of AI, Benedict Evans

Rushing to market can also hurt the quality of the product that is shipped, which ultimately damages the user experience and has counterproductive effects.

“Just this week, Facebook said it was “refocusing” its use of AI after its bots hit a failure rate of 70 percent, meaning bots could only get to 30 percent of requests without some sort of human intervention.

Many brands had already begun dropping their bots, saying that they didn’t do what they were supposed to. Fashion retailer Everlane, which was one of the first Facebook Messenger partners, announced last week it would no longer use it, saying they would rather stick with email. For another conversational commerce pioneer, Spring, customers have found that the bot, based on Facebook Messenger, is hard to use and doesn’t have the level of personalization people expect.” Drop it like it’s bot: Brands have cooled on chatbots, Digiday UK, March 2017

More power to the machines…

Also, relying on conversational commerce further increases the role and presence of technology and AI. It adds to the broader question of the future of work (in Procurement and in general) and raises questions of control, trust, and accountability. But, because conversational user interfaces are what users interact with, it is possible to design them in a way that addresses these challenges and fears.

Intelligent machines are more and more capable of autonomously performing complex activities, including analyzing data and making decisions. This raises an important question of trust in the results that the AI produces. Not knowing how the machine arrived at an output is an obstacle to building trust, which is why researchers are working on what they call “explainable AI”.

“Most machine-learning systems are unable to explain in human terms why they made a decision or what they intend to do next. But researchers are working to fix that. The military’s Defense Advanced Research Projects Agency recently announced a plan to invest significantly in explainable AI, or XAI, to make machine-learning systems more correctable, predictable, and trustworthy. Armed with XAI, your digital assistant might be able to tell you it picked a certain driving route because it knows you like back roads, or that it suggested a word change so that the tone of your email would be friendlier.” What Will Artificial Intelligence Look Like After Siri and Alexa?, The Atlantic, March 2017

In addition, using conversational interfaces is a means to bring context to the AI’s recommendations, making it less of a “black box.” For example, in the conversation about an earthquake that I used as an illustration earlier in this post, the chatbot highlights what it did to build its advice.

Another important question that the new collaboration between people and machines raises is about control. In a model where AI augments people, people have the final say. This is why I have only been talking about recommendations or suggestions: the chatbot always asks for validation.

The conversational paradox…

Conversational interfaces rely on our natural ability to converse. That benefit hides a paradox. It is true that we have innate and learned abilities to converse with other people. But conversing with a computer is not something we are, for now, used to. So, the paradox is that something natural (a conversation) is done with an unusual counterpart (a chatbot), which turns the experience into an unnatural one.

“It’s less friction to, say, set a timer or do a weight conversion with Alexa or a smart watch, as you stand in the kitchen, but more friction to remember that you can do it. You have to make a change in your mental model of how you’d achieve something” Voice and the uncanny valley of AI, Benedict Evans

Years of interacting with graphical user interfaces have left their mark. Plus, despite the massive progress made in AI, machines are not yet at the level of people, so conversations with a chatbot are not truly natural. This explains, in part, why people have not yet massively adopted digital assistants:

- People forget that they can do something in new ways. They must build a new habit.

- Asking a chatbot something introduces a new question: “what words should I use to make sure I will be understood?” (current mainstream assistants recognize only a limited set of keywords or triggers).

It is nevertheless possible to address these challenges. The first aspect I want to highlight is about framing the interactions with chatbots. It is true that AI cannot yet manage any conversation. General AI is still years away. What we have today is what specialists call narrow AI: AI applied to a limited field or set of problems. Conversational interfaces in a business context like Procurement are closer to what a narrow AI can do because the discussions are naturally framed. Compared to an all-purpose chatbot, this limits the number of use cases, and therefore the number of possible keywords / questions / triggers.

Also, how you introduce the assistant helps frame future interactions. Because of the current technological limitations, it is important not to oversell and to be realistic about the AI’s capabilities. On the one hand, the technology can still be improved. On the other hand, human language is very complex and diverse. Plus, professional use cases bring their own complexities for NLP.

“Language is a tool for bias so strong, it could actually keep people out of our industry. That’s right: in the event that conversational products really take off, language could actually block linguistically diverse people from designing a technology chiefly concerned with language. And yet, language at the nuance of dialects is a kind of homogeneity our industry doesn’t broadly track. Given power of language and the explosion of conversational products, we should be considering linguistic diversity at a systemic level.” How does your bot say ‘ketchup’?, Stephanie Engle

For example, names of people or companies are often not in the same language as the language you would use with the chatbot. (I am French, and I live in Austria. So, I speak to Siri in French to maximize chances it understands me. But, as soon as I say someone’s name or an address, Siri is not able to understand me as it expects French words and not German ones…). Of course, the AI could analyze your supplier records to learn how to match them to what you say, changing the effort from “transcribing” to “matching.” Nevertheless, conversational UIs have to take into account the multi-language challenge that global business represents and exacerbates.
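The shift from “transcribing” to “matching” can be illustrated with a small Python sketch. It assumes a hypothetical list of supplier records and a rough transcript coming from the speech engine, and it uses the standard library’s `difflib.get_close_matches` for approximate matching. The supplier names, the `match_supplier` helper, and the 0.6 cutoff are illustrative choices, not a recommendation.

```python
import difflib
import unicodedata
from typing import Optional

# Hypothetical supplier master data.
SUPPLIERS = ["Müller Maschinenbau GmbH", "Schneider Electric", "Voestalpine AG"]


def normalize(name: str) -> str:
    """Lowercase and strip accents so 'Müller' and 'muller' compare equal."""
    decomposed = unicodedata.normalize("NFKD", name)
    return "".join(c for c in decomposed if not unicodedata.combining(c)).lower()


def match_supplier(transcript: str, cutoff: float = 0.6) -> Optional[str]:
    """Return the supplier record closest to the transcript, or None if nothing is close."""
    by_normalized = {normalize(s): s for s in SUPPLIERS}
    hits = difflib.get_close_matches(
        normalize(transcript), list(by_normalized), n=1, cutoff=cutoff
    )
    return by_normalized[hits[0]] if hits else None


print(match_supplier("muller maschinenbau"))
```

When nothing is close enough, the function returns `None`, which is the moment for the chatbot to ask the user to rephrase or to spell the name.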

“My mother waited two months for her Amazon Echo to arrive. Then, she waited again — leaving it in the box until I came to help her install it.

Her forehead crinkled as I download the Alexa app on her phone. Any device that requires vocal instructions makes my mother skeptical. She has bad memories of Siri. “She could not understand me,” my mom told me.

My mother was born in the Philippines, my father in India. Both of them speak English as a third language. In the nearly 50 years they’ve lived in the United States, they’ve spoken English daily — fluently, but with distinct accents and sometimes different phrasings than a native speaker. In their experience, that means Siri, Alexa, or basically any device that uses speech technology will struggle to recognize their commands.” Voice Is the Next Big Platform, Unless You Have an Accent, Sonia Paul

So, it is important to make it clear to people that they are conversing with a machine that has limitations. This avoids the typical overselling mistake that Apple made when it launched Siri. Apple introduced it by saying that people could ask Siri anything and that Siri would understand them. People soon realized that Siri could not understand everything they said every time and that it could not do everything they wanted. This created disappointment and frustration. Once the initial interest had passed, usage dropped. With Alexa, Amazon took a better approach. It presented Alexa as a machine able to understand a limited set of instructions. Then, month after month, it enriched Alexa with new capabilities and a richer vocabulary. Amazon’s approach proved to be the right one because:

- it reduces the risk of not being understood and of having to repeat ten times what you say,

- it creates a set of first positive experiences motivating users to do more,

- it reduces the learning curve as new capabilities are introduced at a steady pace.

“People aren’t stupid. If you over promise or tell them your bot is something that it’s not, they’ll figure it out. Tell them they talk to a machine.” The Ultimate Guide to Chatbots: Why they’re disrupting UX and best practices for building, Joe Toscano

Another source of the paradox I mentioned is that graphical interfaces offer visual cues on what people can do. Menus and buttons define the actions that are possible. With conversations, you start with a blank canvas, and it can be overwhelming because you do not know where to start or how to phrase a request to maximize the chances of being understood and of getting what you want. Here again, the design of the conversational interface matters. So, it is important to give people cues about what is going on and what to do.

It is also possible to design interactions that take emotions into account by integrating this aspect in the development and the content of the conversations. Non-verbal communication must not be forgotten because it also conveys a lot of information.

“Researchers are developing artificial emotional intelligence, or emotion AI, so that our agents can better understand us, too. Companies such as Affectiva and Emotient (which was bought by Apple) have created systems that read emotions in users’ faces. IBM’s Watson can analyze text not just for emotion but for tone and, over time, for personality, according to Rob High, Watson’s chief technology officer. Eventually, AI systems will analyze a person’s voice, face, posture, words, context, and user history for a better understanding of what the user is feeling and how to respond.” What Will Artificial Intelligence Look Like After Siri and Alexa?, The Atlantic, March 2017

An emotionally aware chatbot could adapt the conversation to the personality of the user and to their state of mind. It could even decide to hand over to a real person if it senses that this would benefit the conversation and the user’s goal.

All the design tenets I mentioned apply to both oral and written communication. Another design aspect lies in the fact that each medium has its pros and cons in conveying information. Besides the context of the conversation (in the office, in public, at home…), it is important to consider the type and volume of information to communicate. Voice, for example, is suitable for exchanging a large amount of information because it takes much less time and effort to say something than to type it. It is also faster and easier to listen than to read. Except that sometimes, a picture is worth a thousand words…

“Voice is not necessarily the right UI for some tasks even if we actually did have HAL 9000, and all of these scaling problems were solved. Asking even an actual human being to rebook your flight or book a hotel over the phone is the wrong UI. You want to see the options. Buying clothes over [voice] would also be a pretty bad experience. So, perhaps one problem with voice is not just that the AI part isn’t good enough yet but that even human voice is too limited. You can solve some of this by adding a screen, as is rumored for the Amazon Echo — but then, you could also add a touch screen, and some icons for different services. You could call it a ‘Graphical User Interface’, perhaps, and make the voice part optional…” Voice and the uncanny valley of AI, Benedict Evans

As I mentioned earlier, current messaging applications offer chat features that are far better than the old text-based command line. They can include pictures, movies, sounds, and clickable elements, making this channel a mix of text-based, graphical, and visual communication.

For voice, it is more complicated because it relies only on spoken words. A solution is to make sure that the device the user is talking to has a screen (which would be the case in Procurement-related use cases, because the device would be a phone or a computer). The screen could display information that would take too much time to describe orally.

When it is impossible to add a display, or when a device is not immediately accessible, another solution is to transfer the discussion to a visual channel. This could be done by sending an email with the details or by opening an application that serves as a visual aid and support to the conversation.
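The fallback logic described above (speak when the answer is short, use a screen when there is one, otherwise move the details to another channel such as email) can be sketched as a small decision function. The function name, the return labels, and the threshold of three items are hypothetical and only serve the illustration.

```python
def choose_delivery(item_count: int, has_screen: bool, max_spoken_items: int = 3) -> str:
    """Pick how a voice assistant should deliver an answer.

    max_spoken_items is an arbitrary, illustrative threshold.
    """
    if item_count <= max_spoken_items:
        # Short answers are fine to read aloud in full.
        return "speak"
    if has_screen:
        # Long answers: say the gist, show the details on the device's screen.
        return "speak_summary_and_display"
    # No screen available: move the details to a visual channel such as email.
    return "speak_summary_and_email"


print(choose_delivery(2, has_screen=False))
print(choose_delivery(12, has_screen=True))
print(choose_delivery(12, has_screen=False))
```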

Conversational user interfaces are, for most people, a novelty. They will surely become mainstream, thanks to further progress in AI and to increased acceptance.

As far as Procurement is concerned, CPOs and solution providers must consider them now and look at their potential. They will definitely change the “Procurement’s user experience” by offering a more natural way to work with and for Procurement. Chatbots will also contribute to creating the omnichannel experience that Procurement needs to develop as part of its digital transformation. They have to offer a hybrid communication channel, voice and chat, to cover user preferences and the context of interactions.

The implementation of chatbots in Procurement has to be pragmatic and realistic, as there are (and always will be) limitations to what AI can do vs. what people can do.
